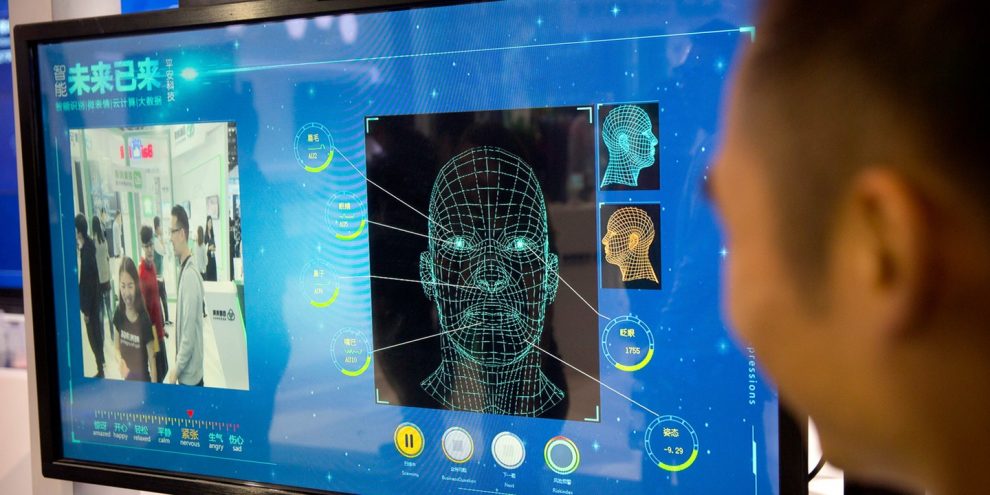

American psychologist Paul Ekman’s research on facial expressions spawned a whole new career of human lie detectors more than four decades ago. Artificial intelligence could soon take their jobs.

While the U.S. has pioneered the use of automated technologies to reveal the hidden emotions and reactions of suspects, the technique is still nascent, and a flock of entrepreneurial ventures is working to make it more efficient and less prone to false signals.

Facesoft, a U.K. start-up, says it has built a database of 300 million images of faces, some of which were created by an AI system modeled on the human brain, The Times reported. The company's system can identify emotions like anger, fear and surprise based on micro-expressions, which are often invisible to the casual observer.

“If someone smiles insincerely, their mouth may smile, but the smile doesn’t reach their eyes — micro-expressions are more subtle than that and quicker,” co-founder and Chief Executive Officer Allan Ponniah, who’s also a plastic and reconstructive surgeon in London, told the newspaper.

Facesoft has approached police in Mumbai about using the system to monitor crowds and detect evolving mob dynamics, Ponniah said. It has also touted its product to police forces in the U.K.

The use of AI algorithms among police has stirred controversy recently. A research group whose members include Facebook Inc., Microsoft Corp., Alphabet Inc., Amazon.com Inc. and Apple Inc. published a report in April stating that current algorithms aimed at helping police determine who should be granted bail, parole or probation, and which help judges make sentencing decisions, are potentially biased, opaque, and may not even work.

The Partnership on AI found that such systems are already in widespread use in the U.S. and are gaining a foothold in other countries too. It said it opposes any use of these systems.

Story cited here.