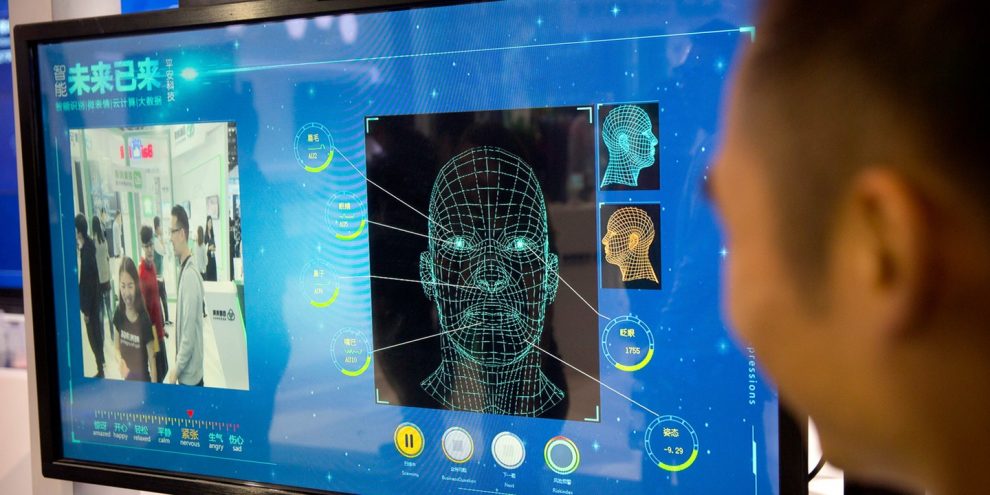

American psychologist Paul Ekman’s research on facial expressions spawned a whole new profession of human lie detectors more than four decades ago. Artificial intelligence could soon take their jobs.

While the U.S. has pioneered the use of automated technologies to reveal the hidden emotions and reactions of suspects, the technique is still nascent, and a whole flock of entrepreneurial ventures is working to make it more efficient and less prone to false signals.

Facesoft, a U.K. start-up, says it has built a database of 300 million images of faces, some of which have been created by an AI system modeled on the human brain, The Times reported. The company's system can identify emotions such as anger, fear and surprise based on micro-expressions that are often invisible to the casual observer.

“If someone smiles insincerely, their mouth may smile, but the smile doesn’t reach their eyes — micro-expressions are more subtle than that and quicker,” co-founder and Chief Executive Officer Allan Ponniah, who’s also a plastic and reconstructive surgeon in London, told the newspaper.

Facesoft has approached police in Mumbai about using the system to monitor crowds and detect evolving mob dynamics, Ponniah said. It has also pitched its product to police forces in the U.K.


The use of AI algorithms by police has stirred controversy recently. In April, a research group whose members include Facebook Inc., Microsoft Corp., Alphabet Inc., Amazon.com Inc. and Apple Inc. published a report stating that current algorithms meant to help police determine who should be granted bail, parole or probation, and to help judges make sentencing decisions, are potentially biased and opaque, and may not even work.

The Partnership on AI found that such systems are already in widespread use in the U.S. and are gaining a foothold in other countries too. It said it opposes any use of these systems.

Story cited here.